At some point in every data migration, someone in leadership asks the question: "Are we ready to go live?"

In most projects, that question gets answered the same way. Someone pulls up a spreadsheet, counts the green rows, estimates the red ones are almost fixed, and says something like "pretty much." The technical lead nods. The PM updates the status deck. And everyone moves forward hoping the untested edge cases don't surface in production.

The problem is not that migration teams lack rigor. Most teams run substantial test suites. The problem is that test results live in places that have nothing to do with the migration itself — ticketing systems, shared drives, email threads, individual laptops. By the time a go/no-go meeting happens, there is no single source of truth. There is a collection of separate artifacts that someone has to manually synthesize into an answer.

Five Categories, No Gaps

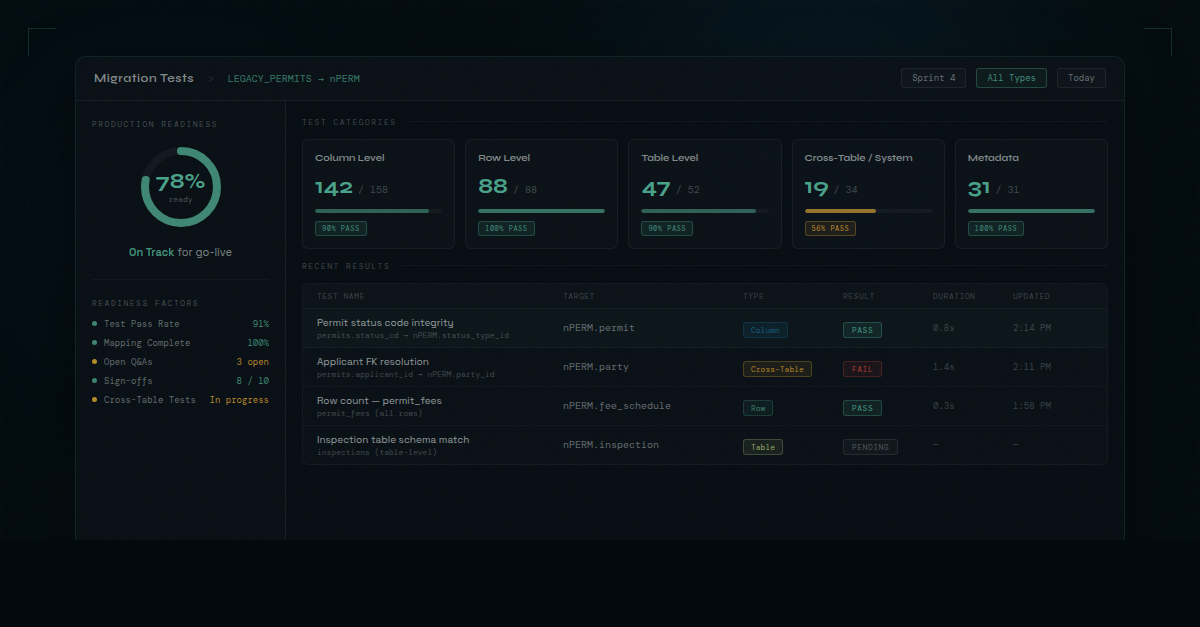

The first thing a structured migration testing practice needs is a classification system that covers the full surface area of what can go wrong. dmPro ships with five pre-defined test type categories: column level, row level, table level, cross-table/system, and metadata.

That taxonomy matters more than it might seem. When test types are undefined, teams gravitate toward the tests that are easy to run and leave gaps in the categories that are harder to automate. Cross-table consistency checks get deferred. Metadata validation gets skipped entirely until QA surfaces something strange. The gaps tend to cluster in the same places that later become production incidents.

Having all five categories defined from day one means a team can look at their test library and see exactly which areas have coverage and which are thin. Column-level tests may be well-populated. Cross-table tests may show as empty. That's a visible gap — one the team can deliberately address before testing is declared complete.
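A coverage check of this kind can be sketched in a few lines. This is a minimal illustration rather than dmPro's implementation: the category identifiers, the dictionary shape of a test, and the function names are all assumptions made for the example.

```python
from collections import Counter

# The five built-in categories named above; the identifiers are illustrative.
BUILT_IN_CATEGORIES = ["column", "row", "table", "cross_table_system", "metadata"]


def coverage_by_category(tests, categories=BUILT_IN_CATEGORIES):
    """Count tests per category, keeping categories that have zero tests."""
    counts = Counter(t["category"] for t in tests)
    return {c: counts.get(c, 0) for c in categories}


def coverage_gaps(tests, categories=BUILT_IN_CATEGORIES):
    """Return the categories that have no tests defined yet."""
    return [c for c, n in coverage_by_category(tests, categories).items() if n == 0]


# Hypothetical test library: well-populated at column level, thin elsewhere.
test_library = [
    {"name": "customer.email is not null", "category": "column"},
    {"name": "customer.dob uses ISO date format", "category": "column"},
    {"name": "orders row count matches source", "category": "table"},
]

coverage = coverage_by_category(test_library)
gaps = coverage_gaps(test_library)
```

Because the category list is fixed up front, an empty category shows up as an explicit zero instead of simply being absent from the report.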

Beyond the five built-in categories, teams can define their own custom test types for scenarios specific to their legacy system or target platform. The structure is always present, but it never forces teams into categories that do not fit their context.

Tests Built From Mappings, Not From Scratch

One of the quiet costs of migration testing is the manual work of setting up test definitions. A team might spend weeks building out a mapping inventory — documenting which source columns map to which target columns, with what transformations — and then spend additional time recreating essentially the same information when configuring tests.

dmPro eliminates that duplication. When a test is defined at the column level, the system automatically references the target columns from the existing mapping. The column-by-column work that has already been done in the mapping pane feeds directly into test setup without requiring any re-entry.

Teams do have control over the targets. If a source column maps to multiple targets and a specific test applies to only one of them, the other targets can be removed from the test definition while leaving the mapping intact. But the default is that the work already done is already there: analysts are confirming scope, not rebuilding it.
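The relationship between a mapping and a test definition might look something like the sketch below. The class names, fields, and sample column names are hypothetical and chosen only to illustrate the idea; the article does not describe dmPro's internal data model.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ColumnMapping:
    """One source column and the target columns it maps to (hypothetical shape)."""
    source: str
    targets: tuple
    transformation: str = ""


@dataclass
class ColumnTestDef:
    """A column-level test definition seeded from a mapping (hypothetical shape)."""
    name: str
    source: str
    targets: list = field(default_factory=list)


def build_test_from_mapping(name, mapping):
    """Pre-populate the test with every target already recorded in the mapping."""
    return ColumnTestDef(name=name, source=mapping.source, targets=list(mapping.targets))


def exclude_target(test_def, target):
    """Drop one target from this test only; the mapping itself is not modified."""
    test_def.targets = [t for t in test_def.targets if t != target]
    return test_def


mapping = ColumnMapping(
    source="legacy.cust.phone",
    targets=("crm.contact.phone", "crm.contact.mobile"),
    transformation="strip non-digits",
)
phone_test = build_test_from_mapping("phone number format", mapping)
exclude_target(phone_test, "crm.contact.mobile")
```

The test copies the target list instead of sharing it, so narrowing the scope of one test leaves the mapping and any other tests built from it unchanged.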

The mapping is not just documentation — it becomes the foundation for testing. When the two are connected, the test suite reflects what was actually designed.

For table-level and cross-table tests, the same principle applies: the system references the tables from the existing project structure. Test authors select the source table, and the targets are pulled in from the mappings. The test definition stays anchored to the migration design, not floating in a separate system.

Results That Flow In, Not Results That Get Entered

Test definitions in dmPro describe what should be tested. Actual test execution happens externally — in whatever test harness, ETL tool, or custom scripting environment the team uses. The two connect via API.

When tests run and produce results, those results flow back into dmPro in real time. Dashboard metrics update as the day progresses. A test that was failing at 9 a.m. and passes by 2 p.m. is reflected in the numbers without anyone opening a spreadsheet, copying a result, and updating a tracking document.
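On the harness side, reporting a result could be as small as the sketch below. The endpoint URL, payload fields, status values, and bearer-token header are assumptions for illustration only; the article does not document dmPro's API, so the real contract may differ. The function builds the request without sending it.

```python
import json
import urllib.request
from datetime import datetime, timezone

# Hypothetical endpoint; not taken from any dmPro documentation.
RESULTS_URL = "https://dmpro.example.com/api/test-results"


def build_result_request(test_id, status, token, executed_at=None, url=RESULTS_URL):
    """Build (but do not send) a POST request reporting one test result."""
    if status not in {"passed", "failed"}:
        raise ValueError(f"unsupported status: {status!r}")
    executed_at = executed_at or datetime.now(timezone.utc)
    payload = {
        "test_id": test_id,  # assumed field names
        "status": status,
        "executed_at": executed_at.isoformat(),
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {token}",
        },
        method="POST",
    )


request = build_result_request(
    "col-customer-email-not-null",
    "passed",
    token="EXAMPLE_TOKEN",
    executed_at=datetime(2024, 1, 15, 14, 0, tzinfo=timezone.utc),
)
# A harness would send this with urllib.request.urlopen(request) after each run.
```

Calling something like this at the end of every test run, instead of in a nightly batch, is what keeps the recorded state in step with the actual state.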

This matters most in the final stages of a migration, when testing is the primary daily activity and the team's attention is split across dozens of open items. In that environment, any gap between when a result is known and when it is recorded is a gap where status becomes uncertain. A PM asking for an update at 4 p.m. gets an answer that differs from the actual test state if no one has logged the afternoon's results yet.

Real-time API integration means the current state is always the recorded state. The conversation shifts from "what do you think the pass rate is?" to "here is what the dashboard shows right now."

From Test Results to Production Readiness

The final piece is where the individual test result — one column, one table, one cross-system check — connects to the larger question the organization is asking.

Test status is one of the factors used to calculate the team's readiness for production runs. As tests pass, that signal flows into the readiness score alongside other project indicators. The dashboard does not just show a list of green and red tests. It shows a composite answer to the go/no-go question, updated in real time as results come in.
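One simple way to picture a composite score is a weighted average of indicators, each scaled to the range 0 to 1. The sketch below is purely illustrative: the article does not specify how dmPro computes readiness, and the indicator names and weights here are invented for the example.

```python
def pass_rate(latest_status_by_test):
    """Fraction of tests whose most recent status is 'passed'."""
    if not latest_status_by_test:
        return 0.0
    passed = sum(1 for s in latest_status_by_test.values() if s == "passed")
    return passed / len(latest_status_by_test)


def readiness_score(indicators, weights):
    """Weighted average of indicators in [0, 1]; weights need not sum to 1."""
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(indicators[name] * w for name, w in weights.items()) / total_weight


# Hypothetical inputs: four tests plus one other project indicator.
latest = {"t1": "passed", "t2": "passed", "t3": "failed", "t4": "passed"}
indicators = {
    "test_pass_rate": pass_rate(latest),  # 0.75
    "mapping_completeness": 1.0,          # invented indicator
}
weights = {"test_pass_rate": 3, "mapping_completeness": 1}  # invented weights

score = readiness_score(indicators, weights)  # (0.75 * 3 + 1.0 * 1) / 4 = 0.8125
```

Whatever the actual formula, keeping the inputs and weights explicit is what lets a team see which indicator is holding the number back.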

That shift — from "here are our test results" to "here is our current readiness state" — changes what a go-live decision looks like. Instead of a meeting where someone synthesizes disparate artifacts into an opinion, there is a number with a clear methodology behind it. Teams can see what is holding readiness back. Leadership can see where the project stands without waiting for a summary report.

The project that answers "are we ready?" with a data-driven, real-time readiness score is a project that has removed the guesswork from one of the most consequential decisions in the migration lifecycle. That is the kind of answer that migrations should be able to give, and that most of them currently cannot.